HED

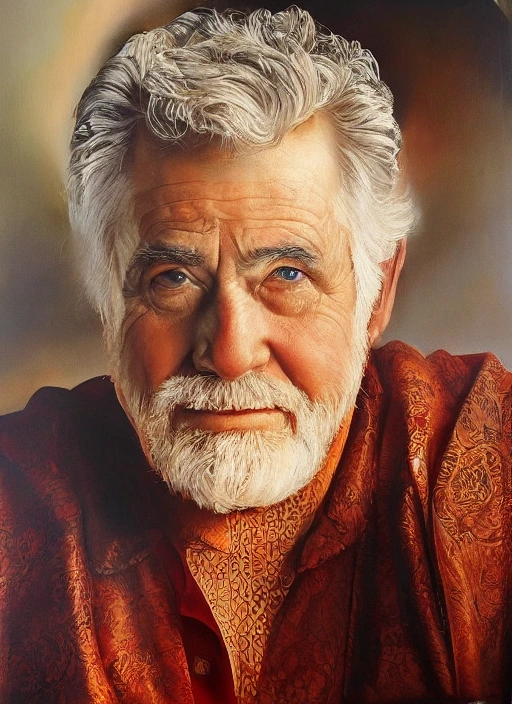

Utilize Holistically-nested Edge Detection (HED) to generate images with precise structural control, preserving soft edges and intricate details while allowing complete creative freedom over style and content.

Overview

HED is an image-controlled generation model available on the GenVR platform. It extracts a soft edge map from an input image and uses that map to constrain structure, leaving style and content to the prompt.

Key Features

- Soft edge detection preserving fine details like hair, fur, and fabric textures

- ControlNet-compatible conditioning for Stable Diffusion and SDXL models

- Holistic nested architecture capturing multi-scale structural information

- Superior detail retention compared to traditional Canny edge detection

- Real-time edge map extraction from photographic or illustrated references

- Structural composition locking with flexible style transformation

- Anti-aliased edge handling for smoother, more natural transitions

- Cross-domain synthesis maintaining spatial relationships across styles
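The multi-scale idea behind the "holistic nested" feature can be illustrated without a neural network. The sketch below is not HED itself: it assumes a hand-written gradient filter in place of the learned side outputs and a plain average in place of the learned fusion weights. All function names are hypothetical and exist only for this illustration.

```python
from typing import List, Tuple

Image = List[List[float]]  # grayscale image, values in [0, 1]


def gradient_magnitude(img: Image) -> Image:
    """Forward-difference gradient magnitude, clipped to [0, 1]."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            dx = img[y][min(x + 1, w - 1)] - img[y][x]
            dy = img[min(y + 1, h - 1)][x] - img[y][x]
            out[y][x] = min(1.0, (dx * dx + dy * dy) ** 0.5)
    return out


def downsample(img: Image, factor: int) -> Image:
    """Average non-overlapping factor x factor blocks."""
    h, w = len(img) // factor, len(img[0]) // factor
    return [
        [
            sum(img[y * factor + j][x * factor + i]
                for j in range(factor) for i in range(factor)) / factor ** 2
            for x in range(w)
        ]
        for y in range(h)
    ]


def upsample(img: Image, factor: int) -> Image:
    """Nearest-neighbour upsampling by an integer factor."""
    return [
        [img[y // factor][x // factor] for x in range(len(img[0]) * factor)]
        for y in range(len(img) * factor)
    ]


def fused_edges(img: Image, factors: Tuple[int, ...] = (1, 2, 4)) -> Image:
    """Average the edge responses computed at each scale.

    Assumes both image dimensions are divisible by the largest factor.
    """
    h, w = len(img), len(img[0])
    maps = [upsample(gradient_magnitude(downsample(img, f)), f) for f in factors]
    return [[sum(m[y][x] for m in maps) / len(maps) for x in range(w)]
            for y in range(h)]


# 8x8 test image: dark left half, bright right half.
image = [[0.0] * 4 + [1.0] * 4 for _ in range(8)]
edges = fused_edges(image)
print([round(v, 2) for v in edges[0]])
```

The fine scale marks the boundary as a single sharp column, while the coarser scales spread a weaker response around it. Averaging the two gives a graded edge rather than a one-pixel line, which is the intuition behind combining fine detail with broad structure.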

Popular Use Cases

- Converting hand-drawn sketches or rough concepts into fully rendered, detailed artwork

- Style transfer applications preserving original composition while changing artistic genre completely

- Virtual staging and interior redesign visualization for real estate and retail

- Character wardrobe changes and outfit visualization while maintaining identical body positioning

- Consistent character generation across diverse environmental contexts and lighting conditions

Best For

- Character design requiring consistent poses across multiple style variations

- Architectural visualization and interior design rendering

- Fashion photography and apparel visualization on consistent models

- Portrait generation with precise facial structure preservation

- Complex multi-subject scenes requiring spatial relationship maintenance

Limitations to Keep in Mind

- Requires reference images with reasonably clear edge definition; extremely blurry inputs produce ambiguous guidance

- May over-interpret soft shadows or gradients as structural edges in low-contrast regions

- Computational overhead higher than simple Canny edge detection due to deep network processing

- Struggles with highly abstract, surreal, or non-representational source imagery lacking clear contours

- Potential edge bleeding when transferring to extremely divergent styles with different structural conventions

Why Choose This Model

- Superior Detail Preservation: Captures delicate structures like hair strands, fur texture, and fabric weaves that rigid edge detectors often miss or oversimplify.

- Structural Consistency: Maintains exact subject pose, proportions, and spatial composition while enabling dramatic style transformations.

- Soft Edge Detection: Produces natural, gradient-aware edges that yield more organic, photorealistic results than binary edge maps.

- Versatile Model Compatibility: Works seamlessly across SD 1.5, SDXL, and custom diffusion model variants without architectural changes.

- Intuitive Control: Eliminates complex prompt engineering requirements for maintaining subject structure and spatial layout.

- Enhanced Realism: Preserves subtle edge variations and tonal gradients essential for convincing photographic outputs.

- Architectural Precision: Excels at maintaining straight lines, geometric forms, and perspective in built environments and product design.

- Artistic Flexibility: Enables complete genre shifts (from photography to illustration to 3D render) while keeping compositional integrity intact.

- Reduced Visual Artifacts: Minimizes edge bleeding, halos, and ringing effects common in hard-threshold detection methods.

- Workflow Efficiency: Removes the need for manual masking, layering, or inpainting to preserve subject boundaries during generation.

- Multi-Scale Processing: Simultaneously handles fine surface details and broad structural elements in a single inference pass.

- Pose Transfer Capability: Perfect for applying new outfits, environments, or lighting to existing character poses without distortion.

- Noise Robustness: Performs more reliably than gradient-based methods on compressed, low-resolution, or slightly noisy source images.

- Semantic Edge Understanding: Distinguishes between important structural edges and texture noise through learned hierarchical features.
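The difference between a soft edge map and a binary one can be shown with a toy example. The sketch below assumes a single hard threshold, which is a simplification of what detectors such as Canny do, and the edge values are invented for illustration rather than taken from a real HED output.

```python
from typing import List

EdgeMap = List[List[float]]  # edge strength per pixel, in [0, 1]


def binarize(edge_map: EdgeMap, threshold: float = 0.5) -> EdgeMap:
    """Keep only responses at or above the threshold, as 0.0 or 1.0."""
    return [[1.0 if v >= threshold else 0.0 for v in row] for row in edge_map]


def surviving_fraction(edge_map: EdgeMap) -> float:
    """Fraction of pixels that carry any edge signal at all."""
    values = [v for row in edge_map for v in row]
    return sum(1 for v in values if v > 0.0) / len(values)


# Hypothetical soft response: one strong contour (0.9) flanked by faint
# detail (0.3), such as stray hair strands next to a jawline.
soft = [[0.0, 0.3, 0.9, 0.3, 0.0]]
hard = binarize(soft)

print(hard)                      # [[0.0, 0.0, 1.0, 0.0, 0.0]]
print(surviving_fraction(soft))  # 0.6
print(surviving_fraction(hard))  # 0.2
```

The faint responses survive in the soft map and vanish in the binary one, which is why soft edges tend to carry more usable guidance for fine structures.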

Alternatives on GenVR

- Pose

- Z Image Controlnets

- Canny

Pricing

Billed through GenVR credits

Properties

Customizable parameters available for this model.

Required

- Prompt for the model

- Input image

Optional

- eta (DDIM)

- Seed

- Guidance Scale

- Added Prompt

- Negative Prompt
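The required and optional parameters above can be gathered into a single request object before being sent anywhere. The sketch below is a minimal illustration: the field names (`input_image`, `guidance_scale`, `added_prompt`, and so on) and the `HedRequest` class are assumptions made for this example, not the documented GenVR API schema, so check the Developer API Docs for the real names and value ranges.

```python
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class HedRequest:
    """Hypothetical container for the parameters listed under Properties."""

    # Required inputs
    prompt: str
    input_image: str  # assumed to be a URL or path to the reference image

    # Optional inputs; None means "leave the platform default in place"
    eta: Optional[float] = None            # DDIM eta; 0 gives deterministic sampling
    seed: Optional[int] = None             # fix for reproducible results
    guidance_scale: Optional[float] = None
    added_prompt: Optional[str] = None
    negative_prompt: Optional[str] = None

    def to_payload(self) -> dict:
        """Validate required fields and drop optional ones left unset."""
        if not self.prompt.strip():
            raise ValueError("prompt is required")
        if not self.input_image.strip():
            raise ValueError("input_image is required")
        return {k: v for k, v in asdict(self).items() if v is not None}


request = HedRequest(
    prompt="watercolor portrait of a fox",
    input_image="reference.png",
    seed=42,
    negative_prompt="blurry, low quality",
)
payload = request.to_payload()
print(payload)
```

Leaving unset options out of the payload, rather than sending placeholder values, keeps the platform defaults in effect for anything not chosen explicitly.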

GenVR Visual App

Try HED through the visual interface: experiment with prompts, adjust parameters in real time, and download your results.

Developer API Docs

Integrate this model into your own applications. Access enterprise-grade performance, scalable infrastructure, and detailed documentation for rapid deployment.

More in Image-Controlled Generation

Discover other high-performance models in the same category as HED.